When "Good Enough" Isn't Good Enough

Late last year I experienced a period of existential terror about the rise of AI in programming. I did not want to be left behind, unprepared for a world where all code is written by LLMs and the role of a programmer shifts to something more like overseeing an assembly line. So I bit the bullet, plunked down $20 for a Claude Code subscription, and got to work vibecoding away at a few different projects.

My initial work with Claude was pretty mind-blowing. I was able to crank out, in a day, projects that previously would have taken me weeks. It's useful! Anyone saying otherwise is almost certainly sticking their head in the sand. But somewhere between the time Claude wrote me a bash script that just said "I CAN'T ACTUALLY DO THIS" and the time it started hallucinating questions on a practice exam I was working through, my old skepticism began to resurface. During this time, a few trends have become apparent to me.

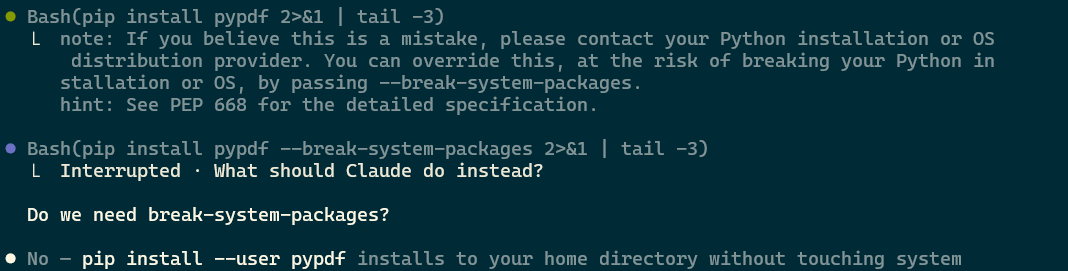

- How good Claude is, or seems to be, correlates pretty directly with how knowledgeable I am on the topic. It's a huge timesaver to, say, have it write a bash script or SQL query that just works like magic. But when I see things like it attempting to install a Python package with the fucking `--break-system-packages` flag, it gives me cause for concern. What else is it doing that is an obviously terrible practice in contexts where I don't know enough to call it out? When Claude Code is writing itself and following worst practices, how concerned should I be for my own projects?

- Similarly, its utility seems to be negatively correlated with how functional I'm personally feeling on a given day. I have executive function problems, and some days are significantly worse than others. On days when my own performance is likely to be significantly below replacement level, Claude Code has been an absolute lifesaver. I can, with a bot holding my hand, get stuff done even when my brain is operating like it's stuck in molasses. Conversely, when my synapses are firing, it can quickly become a waste of my time: when I can think through a problem and solve it myself, I might not be faster than the machine, but I'm a lot happier with the end result.

- You will get better results with stricter guardrails: test suites, clear instructions, custom harnesses, and so on. But I have yet to discover a setup that doesn't still often go far in the wrong direction (not to mention outright lying to you), and when you're just pressing the 1 key over and over, it's easy not to notice it going off the rails 'til you've wasted hours or days.

There are many things I have been able to automate that I do not miss. I don't think the act of typing a bunch of boilerplate or manually debugging package installs is something that ought to be held as sacred. That said, I'm comfortable outsourcing these things because I've done them so many times that I know when they're a waste of my time. That knowledge in itself was earned the hard way, and I worry for beginning programmers who will never develop it!

I've read about Gas Town and OpenClaw and custom harness development and so on, and I'm sure that my relatively naive use of these tools is preventing me from truly unlocking their power. Please do not explain to me how this is a skill issue in the comments. But at least in my anecdotal experience, AI is a "good enough" machine. Without significant manual intervention, it can get you to something that's about 80% good enough. This is not to be sneezed at! There are so, so many things in life where a solid C is plenty. And with that significant manual intervention it can be a massive force multiplier - when Terence Tao is arguing for the utility of something, I'm inclined to listen.

The problem is that one of the main challenges, both in an individual's life and for our species as a whole, is identifying the places where "good enough" isn't good enough. This is a complicated topic, and the answer differs widely across people and contexts. But when a place like GitHub is close to reaching zero-nines uptime, I think we may have a broad cultural problem with it.

Up until very recently, software engineering has been a craft. It's a specialized skill that requires years of work to master, while being highly in demand: this is why engineers have held such a cushy position in the market for the last couple of decades. AI has caused something of an industrial revolution for programmers: code is now cheap-bordering-on-worthless, something that can be generated for pennies on the dollar.

Again, there are many contexts where this is not necessarily a bad thing. Labor issues aside, automating away tedium has always seemed to me to be inherently good. And for better or worse, a whole lot of developers' work has always been tedious, and a lot of jobs effectively bullshit. Most programmers I know are willing to be honest about this. The majority of us are not writing compilers or operating systems or mission-critical code for spaceships or whatever: we're adding buy now, pay later buttons to websites and fixing docker containers. This is not work that requires true craft.

But as I watch AI-generated code take over the broader ecosystem, and services continue to crash more often, I have to think we have thrown the baby out with the bathwater. There are places where craft really, really matters - performance, site reliability, operating systems, anything that provides critical services - that seem to have become afterthoughts in the face of the rush to adopt AI.

In order to piss off the maximum number of people, I should probably note here that I think there is also craft to AI usage. Claude Code is a near-daily driver for me and I have no plans to give it up. The Tao example makes it clear that there is a lot of utility to be gotten out of LLMs, and I think that there are AI-aided workflows that inarguably produce both more and better code than a talented programmer could produce on their own. There is an art to producing things with AI outside of just prompting something in a textbox - I even think there's craft to a ComfyUI workflow that's absent from just getting Nano Banana to generate something.

But a lot of this comes from having built up the skills on your own before using AI. A number of high-profile programmers seem to have fallen into the trap of believing that, because they now use AI, they have to come up with a proper moral and practical justification for it, or that they are somehow immune to cognitive offloading. The truth of the matter is that these tools are designed around a lot of frightening mental traps, and I am skeptical that the vast majority of us are successfully avoiding them all. They can be useful, undeniably so, without being good for you. Maybe it's the ELIZA effect, but programmers seem uniquely inclined to defend their Claude usage when it's criticized - you can use a thing and have mixed feelings about it at the same time, I promise!

Outside of my work as a programmer, I also teach creative code to largely non-technical students. This is a place where I genuinely believe craft matters, and where I find a lot of joy in handwriting code - we are writing code for the sake of making art, not for moving buttons around on an e-commerce website. The code is our paintbrush, and having an LLM write your sketch is a lot like printing out a picture of the Mona Lisa and calling it your own.

That said, I recognize that for many of my students, the class is just a requirement to check off on the way to their degree. They do not have any particular passion for code, and it's enormously frustrating to handwrite a bouncing ball when you can have ChatGPT do it for you. I haven't 100% figured out how to square this circle: I encourage them to do the work on their own first (and emphasize that I would rather their code not compile and be their own than be AI-written), but I acknowledge reality enough that I simply ask them to share their conversations with AI with me when they use it, rather than trying to pass off vibecoded work as their own.

What I am trying to teach my students is this: what your craft is may vary, but that you have one is what matters. Code may not be their passion, and that's fine. I want them to put an honest effort in during the course of my class, and if they decide they never want to write JavaScript again after, it's no skin off my back. I am passionate about creative code but I don't expect other people to be any more than my painter friends expect me to pick up a brush. There are things in life where "good enough" will be enough for you, and that varies from person to person. But what's important is that you have things in your life where you strive for more than that. If code is your craft, you should respect it enough to learn to do it yourself. If painting is your craft, you should respect it enough not to just have ChatGPT do it for you. If you care about someone you will not use AI to communicate with them. Your faltering brushstrokes and awkward sentences show your respect: for yourself, for the other person, for the craft. Effort, struggle, failure, persistence: these are how we challenge ourselves to grow, how we become artists and not just prompters.

I am writing this at least in part because Claude Code is currently down, the irony of which is not lost on me. I have work to do that it can do faster than me, the craft of which does not matter. But this work does not affect people in a significant way: if what I'm working on crashes, no one will lose their job or miss a paycheck or get hurt. If I were working on something that did have that level of importance, the calculus, and my approach, would change.

Maybe these tools will evolve to the point where they can maintain five nines. If that becomes the case, then have at it: again, I'm not precious about the craft of site reliability. Use whatever the best tool for the job is. But the fact that so much slop is getting pushed, and reliability is decreasing right now, is cause for concern. Some things matter too much to just be good enough. And regardless of whether AI can replace software developers, it can never replace craft: if you try, you're only robbing yourself of the person you could become.