Thou shalt not make a machine in the likeness of a human mind

Today I am questioning even my minimal attempts to give the benefit of the doubt to AI. A thing is ultimately what it does and dear god there is so little good coming out of this technology right now and so much bad.

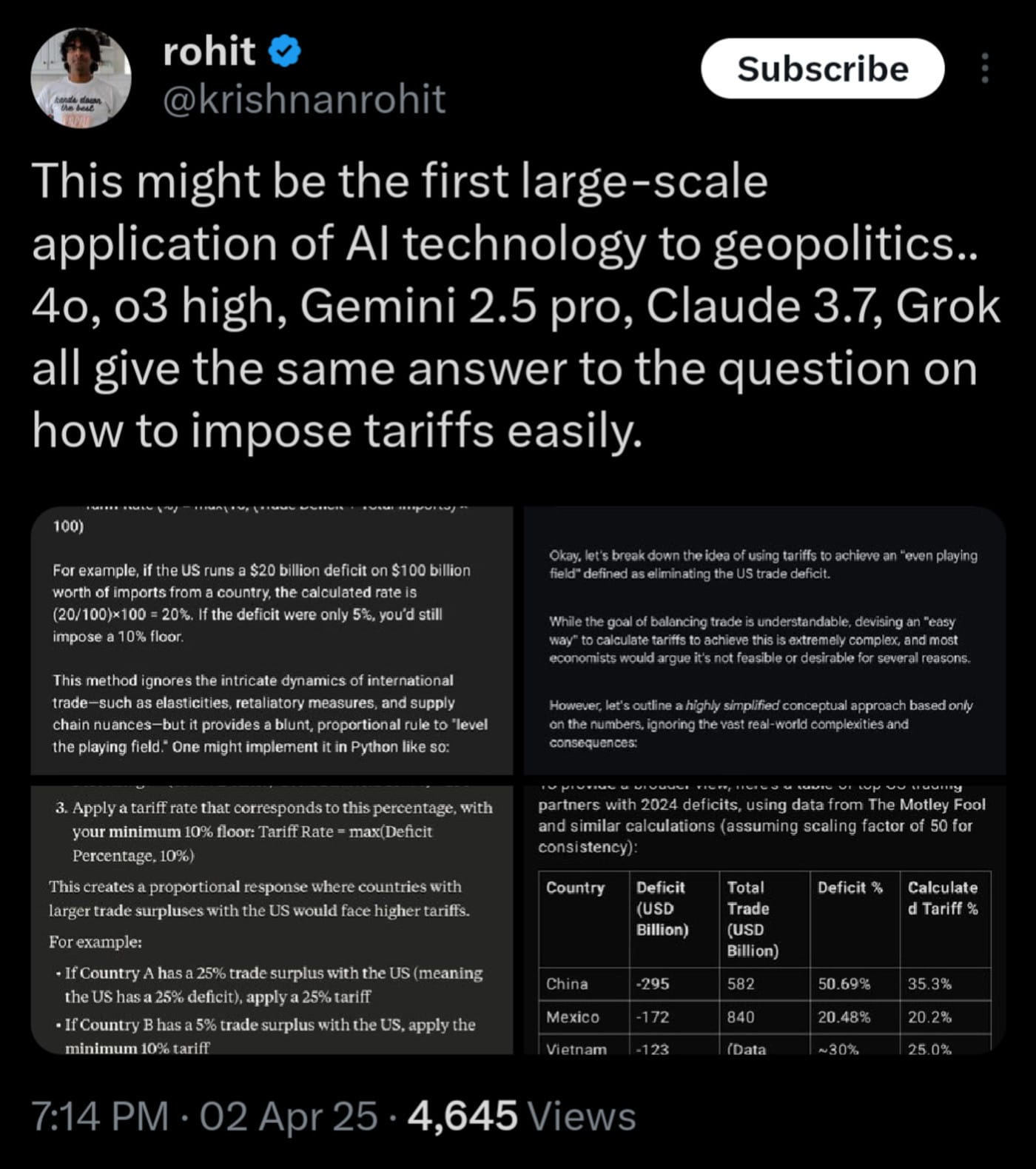

As far as anyone can tell, the tariffs announced yesterday (which will, if upheld, most likely result in a recession at best) were literally just calculated based on asking a chatbot how to eliminate trade deficits. Gemini even prefaced its response with "this is a super bad idea, but if you want to, here's how you'd do it". Of course, one side effect of putting the machine that makes you illiterate out into the world is that people don't even pay that much attention to what it's saying. Why would you when you can just use the output without having to think about it?

There are so many reasons things are bad right now, and you can't really disentangle any one of them from all the others. A lot of my counters to knee-jerk AI criticism come down to the idea that the problem isn't the technology, it's the structural and economic incentives for its use. I do stand by that - ultimately AI is just Big Math and I don't think math is inherently bad or good - but it seems increasingly clear that quibbling about that distinction doesn't matter a whole lot. This thing exists in the enormously stupid world we currently have and so now it is in part responsible for crashing the whole damn economy.

I can never seem to find the screenshot but I think a lot about a post I saw to the effect of "how bad of a world do we have to live in where the prospect of robots doing work for us is a bad thing". If the vast wealth of this world were apportioned fairly and we didn't live in a bizarre productivity death cult it would be kind of nice to think of all the email jobs getting automated away. If we taught kids that the value of art is in struggling, in the process, in the inherent humanity of it all, maybe we wouldn't be stuck in endless debates over Ghiblifying things. If more people were media literate (or just literate, I guess) then maybe we wouldn't have to worry about the hallucinations and misinformation so much. If we prioritized sustainability and local software over "model go brrr" maybe the environmental impact wouldn't be so concerning.

These are a lot of big ifs that probably aren't getting addressed anytime soon. While I like imagining what this world could look like, I understand why a lot of people have shortened the thought process to "this thing is bad". You're right! It's mostly bad! And humanity is too stupid to handle the fact that it exists! I guess the only hope is that once the dust of the next three years settles we get some sort of Butlerian Jihad (or at least some sort of substantive legislation on when and where it's appropriate to apply AI rather than just letting anyone release anything at any time). Maybe in a better world this tech could be good, but we have to build that world first.